Software

Simulation

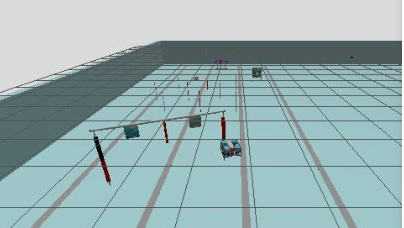

To make the most of our testing time in the pool, we created a simulation of the course in Gazebo. It lets us test the state machine logic and vision algorithms without being physically in the pool.

Because it lets us catch software problems before a pool session, the simulation is essential to achieving our goals.

Vision

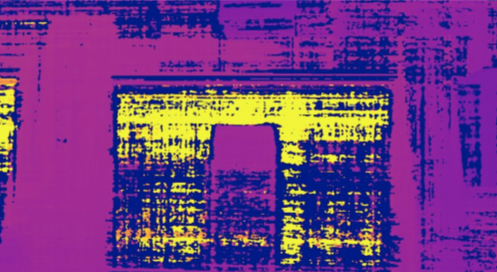

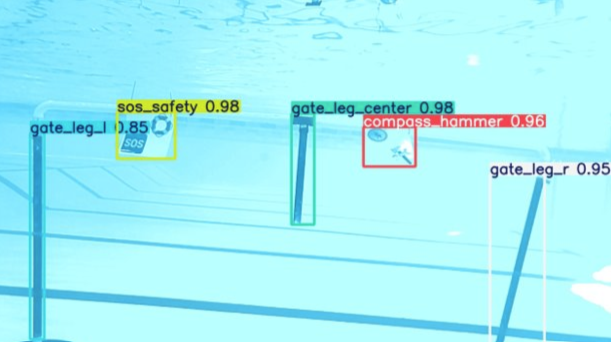

To detect objects underwater, PIGEON carries two cameras: a forward-facing stereo camera, and a downward-facing camera that identifies objects beneath the submarine.

A YOLO (You Only Look Once) detection algorithm identifies objects in the camera images during missions. Additional vision algorithms estimate each object's angle and depth, which keeps control of the submarine smooth and accurate.
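As an illustration of how an angle and a depth can be derived from a detection, here is a minimal Python sketch. It is not the team's actual code. It assumes an ideal pinhole camera and a rectified stereo pair, and the function names, field of view, focal length, and baseline are all hypothetical values chosen for the example.

```python
import math


def bbox_center(x_min, y_min, x_max, y_max):
    """Return the (u, v) pixel center of a detection bounding box."""
    return ((x_min + x_max) / 2.0, (y_min + y_max) / 2.0)


def bearing_from_pixel(u, image_width, horizontal_fov_deg):
    """Horizontal angle in degrees between the optical axis and pixel column u.

    Assumes a pinhole camera whose principal point is the image center.
    Positive angles are to the right of the image center.
    """
    cx = image_width / 2.0
    # Focal length in pixels, derived from the horizontal field of view.
    fx = cx / math.tan(math.radians(horizontal_fov_deg) / 2.0)
    return math.degrees(math.atan2(u - cx, fx))


def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth in meters from stereo disparity: Z = f * B / d.

    Assumes a rectified stereo pair with focal length f (pixels)
    and baseline B (meters).
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical detection in a 640-pixel-wide image with a 90 degree FOV.
    u, v = bbox_center(400, 200, 480, 280)
    print("bearing (deg):", bearing_from_pixel(u, 640, 90.0))
    print("depth (m):", depth_from_disparity(50.0, 500.0, 0.1))
```

The bearing can feed a yaw controller to center the object, while the depth gives the distance left to travel. A real underwater camera would also need calibration for lens distortion and refraction at the housing, which this sketch ignores.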

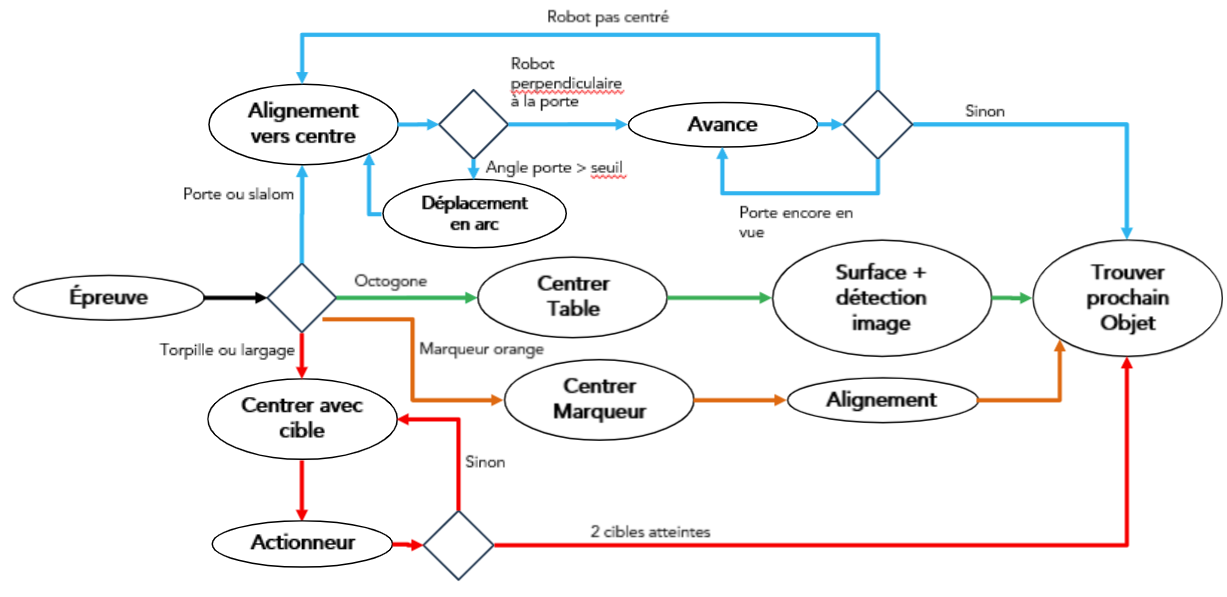

State machine

The Nautilus team developed a custom decision tree. The team first identified the tasks it wanted to complete (Gate, Slalom, Torpedo, and Dropper), then used that list to determine an optimal path for PIGEON.

The accompanying diagram shows the decision tree.
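To make the idea concrete, the following Python sketch shows one way a mission sequencer over these four tasks could be structured. It is an assumption-laden illustration, not the team's implementation: the task order, the retry policy, and the names `Task`, `NEXT_TASK`, and `run_mission` are hypothetical, and the real decision tree may branch differently.

```python
from enum import Enum, auto


class Task(Enum):
    GATE = auto()
    SLALOM = auto()
    TORPEDO = auto()
    DROPPER = auto()
    DONE = auto()


# Hypothetical task order; the actual path chosen for PIGEON may differ.
NEXT_TASK = {
    Task.GATE: Task.SLALOM,
    Task.SLALOM: Task.TORPEDO,
    Task.TORPEDO: Task.DROPPER,
    Task.DROPPER: Task.DONE,
}


def run_mission(attempt_task, max_retries=1):
    """Run each task in order and record whether it succeeded.

    attempt_task is a callable taking a Task and returning True on success.
    A failed task is retried up to max_retries times, then skipped so the
    mission can continue (an assumed policy for this sketch).
    """
    results = {}
    task = Task.GATE
    while task is not Task.DONE:
        succeeded = False
        for _ in range(1 + max_retries):
            if attempt_task(task):
                succeeded = True
                break
        results[task] = succeeded
        task = NEXT_TASK[task]
    return results


if __name__ == "__main__":
    # Simulated run in which every task except Torpedo succeeds.
    outcome = run_mission(lambda task: task is not Task.TORPEDO)
    for task, ok in outcome.items():
        print(task.name, "succeeded" if ok else "skipped after retries")
```

Because `attempt_task` is passed in as a callable, the same sequencing logic can be driven by stubbed behaviours in simulation and by the real behaviours in the pool.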